Less than a year ago, I felt pessimistic about our chances of success in the fight to reduce emissions and to halt and reverse climate issues. Events in the past year have made me more optimistic, and while there is a long road ahead, I see many indications that provide renewed hope.

Weather-related events appear to be worsening at an alarming rate, with dramatic increases in fires, floods and high temperatures compared with the past. While the current target is to achieve net-zero emissions, society must realize that net-zero is not a solution; it is only the point at which weather issues stop getting worse. At the present rate of progress, our climate issues will be far worse than they currently are by the time we reach net-zero emissions.

Amidst the gloom, however, we see action by governments and by individuals suggesting a growing recognition of this threat. In April of 2022, Elon Musk funded a $100 million XPrize for innovative solutions that will take carbon from the air and lock it away. Several startup companies are well underway with pilot projects aimed at capturing these prizes. Additionally, Tesla and Volkswagen are now among automakers that see plentiful sources of manganese that may make batteries and EVs affordable for mainstream buyers. An affordable EV may have a large impact on our future.

Germany announced recently that it might defer the shutdown of its last few nuclear generating stations. While this is driven by other events, it appears that Germany sees a need to avoid increased use of coal by keeping nuclear generation, an emission-free source that was seen as a threat in the recent past.

Currently, there are advances on two distinct fronts: Public discussion on the clean energy sources that will be used to displace fossil fuels and technical advances in our electric grid that will deliver most of our energy. This supply of clean energy is hotly contested and is the subject of many discussions (some seem based on idealistic targets with little technical backing), while the advances in electric grid technology are understood by few and advance rapidly with almost no public discussion. It is in the latter area that companies such as Generac are active participants, developing and implementing many new concepts.

Following are a few of the concepts that may have a large impact on our future grid. Most of these have the potential to integrate distributed energy sources, improve efficiency and deliver more energy while maintaining power quality for users.

Innovation Could Substantially Reduce Loss

Based in Texas, Metox Technologies is the new company now home to Bud Vos, GGS’s former CEO. The company has developed a new form of conductor to deliver electric power. It includes a clever bunch of physicists and appears to make conductors that have very low resistance and emit less EMF radiation. At present, the average loss between a generator and a home is about 6%-8%. While that may seem low, it may change dramatically. The existing grid is designed to deliver the peak demand needed on a very hot or a very cold day. The average load over a year is about 50% of this peak, so with the help of Generac Grid Services’ virtual power plant technology, grid-edge storage and demand management, the existing grid could deliver almost twice the energy that it currently delivers. Loss increases with the square of the current. If the current is doubled, as would occur if the grid ran continuously at near maximum, the power delivered would double, but the total loss would increase four-fold. A loss equal to 8% of today’s delivered energy could easily become more than 30% of that same amount. The high-temperature superconducting (HTS) wire from Metox may have the potential to help deliver the growing amount of electrical energy that will be needed.
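The square-law effect described above can be checked with a little arithmetic. The sketch below is illustrative only: it assumes every loss is resistive (proportional to the square of the current) and that voltage is fixed, so delivered power rises in step with current. The function name and the 8% starting figure are assumptions chosen to match the discussion, not measured data.

```python
def scaled_loss(base_loss_fraction: float, current_multiplier: float) -> tuple:
    """Return (loss as a fraction of the ORIGINAL delivered power,
               loss as a fraction of the NEW delivered power)."""
    # Resistive loss grows with the square of the current.
    loss_vs_original = base_loss_fraction * current_multiplier ** 2
    # At fixed voltage, delivered power grows linearly with current.
    loss_vs_new = loss_vs_original / current_multiplier
    return loss_vs_original, loss_vs_new


vs_original, vs_new = scaled_loss(0.08, 2.0)
print(f"{vs_original:.0%} of today's delivery, {vs_new:.0%} of the doubled delivery")
```

Under these assumptions, doubling the current turns an 8% loss into 32% of the original delivery, which is 16% of the doubled delivery. Either way, the loss grows faster than the energy delivered.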

Energy Storage Plus Generation Is a Hidden Goldmine for the Average Consumer

I recently priced a Generac home standby generator system for my home. I required a 22 kVA generator to start my heat pump and AC unit, the freezers, and a few other items. However, the average load in my home is less than 2 kW, so the generator is sized to start some loads and would operate at less than 10% of its rated capacity most of the time. The new PowerGenerator generates direct current power to charge a battery, and a battery inverter supplies the starting power for these appliances. This arrangement dramatically improves average efficiency and provides several added benefits. The generator now needs to be only a fraction of the size of a conventional machine, and it operates ONLY when the battery is at a low level and requires charging. Efficiency and emissions could be greatly improved, and generator operation at night would likely be minimal. Another rarely mentioned advantage is that an inverter can be paralleled with the grid to provide support, whereas a conventional single-phase generator cannot be directly connected to the grid. This will allow grid-support activities to become a significant revenue source for many homeowners.

The ability to perform many new and complex tasks has become a common reality through surprising technological innovation. The technology and computer power needed to manage these systems are developing at a remarkable rate. When I was a student studying engineering in the 1960s, I learned to program using an IBM 1620 computer, something we all considered to be a magic box with unbelievable computing power. When I heard recently that my iPhone had more than 10 times the computing power of the computer that served my entire campus, I was shocked. A little after I graduated, the first Intel microprocessor was introduced. It apparently contained 2,300 transistors (the little semiconductor devices that switch between one and zero). That was a remarkable achievement at the time, but today the latest Apple M1 Max chip has more than 50 BILLION transistors inside: an increase of seven orders of magnitude.

When one looks at the work that is underway in almost all areas of energy production, delivery and consumption, it is not hard to become far more optimistic than we have been in the past. We must focus on a clear target and develop strategies that will achieve our goals and put aside the divisions and disputes about what is the cleanest option. It may be better to pick a suboptimal choice that can be achieved quickly than to argue over the best solution and achieve little in a reasonable time.

This is an important issue, and we all need to participate. There are so many opportunities in so many aspects of energy production, delivery and use, that roles exist for everyone. Innovation will be key to solving our climate crisis, and I believe that the Generac Grid Services culture strongly encourages and promotes individual innovation. Even those that cannot be innovators can fulfill an equally important role by being an implementer. There is a lot to be done in a short time, which is a momentous task but can provide fun and interesting challenges for every member of our Generac Grid Services team.

Author: lmmetcalfe

Elephants in the Room

I read a recent paper published by JP Morgan on the Energy Transition[1], and its theme was issues that are Elephants in the Room. The graph below, taken from that paper, shows the relative changes that are taking place as new technology is implemented, the magnitude of the change so far and just how much more will be needed.

The public has not found the same incentive to adopt clean energy that it has found with other technologies. The graph shows the rapid increase in the application of technology in other areas, including smart phones, high speed internet access and e-commerce. At the absolute bottom right corner of the chart is the share of total energy provided by wind and solar, as well as the overall share of EVs on the planet. This is a concern and may be somewhat disheartening. In looking at the floods that are occurring in Australia, as well as wildfires that seem to occur in many areas, it is hard to understand why there has not been a far greater reaction to address energy and climate related emergencies. Meanwhile we see that more than 70% of new vehicles are either trucks or SUVs, a strong indication of a reluctance to accept climate change and a changing world.

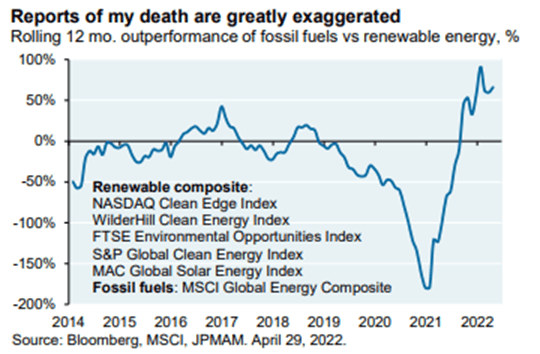

A subsequent graph in the same paper showed the ratio of the stocks of clean energy companies to those of oil and gas companies. This appears to reflect a transition that is taking place at some of the oil and gas companies. European companies (Shell, Total and BP) appear to be in the process of converting from oil and gas companies to energy companies, and the results are interesting. Shell has announced plans to be a major electrical utility and has made significant advances in EV charging, as well as clean electricity sales. Transitions of this type reflect a realization of reality and a commitment of major industries to change course.

The Elephant in the Room Remains

It is becoming apparent that this fight is going to need support on many fronts. The share of total energy provided by wind and solar is still near 5%, with little chance of clean energy reaching the required target of 100% in about 28 years. As mentioned in a previous note, we need to improve efficiency, reduce use and use clean fuels. This all appears to lead to the electrification of most, if not all, energy demand. The most important missing piece is support from consumers. The paper went on to describe several of the elephant issues, all of which need to be considered carefully:

- The costs for wind and solar generation have fallen dramatically, and enthusiasts claim that victory is near. But to utilize this form of generation, other factors need to be included. Storage to smooth an intermittent supply is required, and there are two extremes here. Where there is hydro generation available, the storage may be easily captured at low or even no cost. At the other extreme is the use of batteries for longer term storage. While battery storage costs have declined dramatically, the potential need to store energy for more than a few days becomes a large cost and could drive prices for electricity up significantly.

- The reduction of fossil fuel in electrical generation is often the stated target, but the principal uses for fossil fuels are generally heating, transportation and industry, and these would all be new loads for the electrical grid. Reducing existing fossil fuel use for electrical generation, while a significant achievement, is a small start. We will potentially need 3-4 times the existing electrical supply. The big challenges will be to provide heat and energy for industrial use. Transportation, while a problem, is less critical because the efficiency of existing internal combustion engine powered vehicles is so low that a transition to electricity will reduce energy use to less than 25% of existing use for similar vehicles. Emission reductions for a transition to EVs are large, but the energy consumption is relatively small. A 10-year transition of all existing personal vehicles powered by fossil fuels to electricity would increase the total electrical demand by less than 2% annually, a growth rate that is far less than what was normal a few years ago. This transition, with low electrical needs and a large impact on emissions, NEEDS TO BE A PRIORITY.

- The most challenging issue is the divide between the developed and developing world. In the last 20 years, much of the heavy industry that creates the largest share of emissions has moved to developing countries. To compound this issue, people in these countries earn far less money than people in North America or Europe. Any major increase in energy costs could easily result in the starvation of many people living at near poverty levels. This will need careful consideration by the global community.

If these challenges are not sufficient to bring focus to the issue, the recent war in Ukraine has caused massive upheaval in Europe, as much of its oil and gas came from Russia. That supply will soon be stopped. Compounding this issue, Norwegian oil and gas workers closed their fields during a strike, although the Norwegian Government ended the strike. All of this has resulted in dramatic increases in energy costs everywhere.

Opportunity Is in Chaos

The existing situation is chaotic, but amidst the chaos, there may be opportunity. One may expect that the threat of long term increases in fossil fuel prices will lead to countermeasures among users that will reduce use, be more efficient or switch to cheaper fuels. All of this will hopefully be consistent with the target of reducing emissions. We can now hope that the prices for storage, clean generation or energy efficiency devices such as EVs do not inflate rapidly. We live in a period that has a need for rapid change, and perhaps the crisis that we are witnessing in our energy supply may be a ticket for real progress.

Megatrends in the Electric System

The electric grid is undergoing rapid modifications, driven largely by the need to address climate change. The consumption of fossil fuels needs to be reduced dramatically in a short time. The structure of the existing energy system is becoming inefficient and ineffective. Meanwhile, the challenges in the coming transition may be a lot larger than the industry and consumers anticipated. Three key issues are in dire need of attention to usher in a smooth transition, and each will play a major role in what lies ahead:

- Overall capacity AND energy needs

- Dispatchable and undispatchable resources

- Energy-constrained and power-constrained resources

Reliance on Fossil Fuels

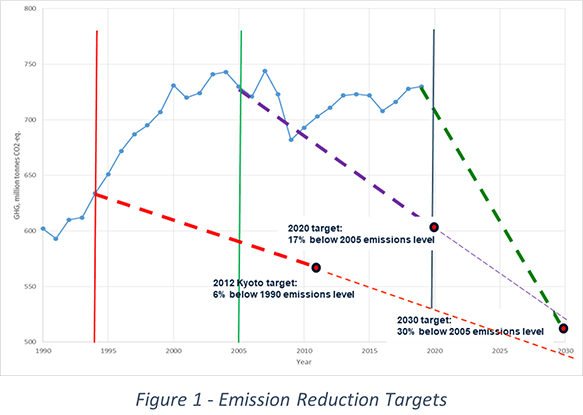

Fossil fuels (liquid petroleum products, natural gas and coal) have been large, inexpensive sources of energy. The 1992 Earth Summit in Brazil, which produced the UN climate convention that the Conference of the Parties (COP) meetings now serve, recognized the need to reduce emissions. At that time, 87% of global energy was provided by the combustion of fossil fuels. In 2020, after 28 years of initiatives, target setting and talking, 83% of global energy was still reliant on fossil fuel. Even Germany, with large investments in renewable energy, reduced reliance only to 78% of its total energy supply. Figure 1 shows targets set by Kyoto, Paris and now the COP Conference held last year in Scotland. Progress is minimal, and each target shows a steeper requirement to achieve the proposed target. Currently used concepts are failing to meet the projected and agreed upon targets. There must be a better way forward. i

Two key issues that need to change are efficiency and demand. But there is a reason for the lack of focus on them: energy is too cheap! People complaining about the cost of gasoline will need to stop and look at a bigger picture. A healthy young man with a shovel can do about 2-3 kWh of work in an 8-hour day. At normal electrical utility prices, that amount of work would be worth less than $0.40. It is suddenly not hard to understand why waste and efficiency have not received the attention that will be required to usher in this energy transition.
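The shovel comparison is simple to reproduce. The sketch below assumes a retail electricity price of $0.13 per kWh, which is my own illustrative figure rather than one quoted above; the function name is likewise hypothetical.

```python
def value_of_manual_work(kwh: float, price_per_kwh: float = 0.13) -> float:
    """Dollar value of `kwh` of work if bought as electricity instead.

    The default price is an assumed retail rate, not a quoted figure.
    """
    return kwh * price_per_kwh


for kwh in (2, 3):
    print(f"{kwh} kWh of work -> ${value_of_manual_work(kwh):.2f} of electricity")
```

Even at the top of the 2-3 kWh range, a full day of hard labour comes to less than $0.40 at this assumed price.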

Energy Needs

The electric grid seems to be identified as the key system to displace all uses of fossil fuel, but the challenges associated with such a catch-all are significant. Currently, US electricity supply delivers only 19% of the total energy needs of users. Natural gas delivers 29%, oil delivers 51% and coal delivers 2% (not including coal used to generate electricity). Over 80% of energy delivered is based on the use of fossil fuels, and even if the existing electric system can be modified to deliver all “clean” energy, the physical changes needed to increase delivery by the electric grid from 19% to 100% are more than a challenge if current methods are used. However, there may be opportunities to absorb more capacity into the electric grid than what has been possible in the past. Communications, controls and technology can provide solutions previously not considered. There are other high level factors in the sources of energy that also need to be considered. Most available generation falls into one of two general subcategories:

- Energy-constrained resources deliver a limited amount of energy over a period. A hydro generating source is an example of an energy constrained resource. The maximum energy that can be generated in a year is based on the total flow of water available for generation in that year.

- Capacity-constrained resources deliver a fixed peak amount of power with no limits on the total energy that can be delivered. Examples are coal fired generators, gas turbines or nuclear-powered generators. These stations are power constrained; their energy output is limited only by the fuel supply.

Much of the Canadian grid, powered by hydro facilities, is energy constrained, while much of the US, powered with coal fired steam turbines and nuclear-powered generation is capacity constrained. What is significant in the US is the fact that any large-scale conversion to renewable capacity (wind and solar) will displace capacity constrained generation with energy constrained sources. This transition will have significant effects on grid operations.
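The distinction between the two categories can be made concrete with a small model. This is a minimal sketch under my own assumptions: the class, its field names and the example numbers are hypothetical, and a real resource has many more limits (ramp rates, minimum output, maintenance).

```python
from dataclasses import dataclass


@dataclass
class Resource:
    name: str
    max_power_mw: float        # instantaneous capacity limit
    energy_budget_mwh: float   # energy available over the period (inf if fuel is not limiting)

    def deliverable_mwh(self, hours: float) -> float:
        """Energy deliverable over `hours`: the smaller of the two limits."""
        return min(self.max_power_mw * hours, self.energy_budget_mwh)

    def binding_constraint(self, hours: float) -> str:
        """Which limit is reached first over the period."""
        if self.energy_budget_mwh < self.max_power_mw * hours:
            return "energy"
        return "capacity"


HOURS_PER_YEAR = 8760
# Illustrative numbers only: a hydro plant with a finite annual water budget,
# and a gas turbine with fuel assumed to be unlimited.
hydro = Resource("hydro", max_power_mw=100.0, energy_budget_mwh=400_000.0)
gas = Resource("gas turbine", max_power_mw=100.0, energy_budget_mwh=float("inf"))

for r in (hydro, gas):
    print(r.name, r.binding_constraint(HOURS_PER_YEAR), r.deliverable_mwh(HOURS_PER_YEAR))
```

Both example plants have the same 100 MW rating, yet over a year the hydro plant is stopped by its water budget while the gas turbine is stopped only by its nameplate capacity.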

One often hears that hydro plants have the same annual capacity factors as many wind turbines and, as a result, that wind turbines can be used in the same way as hydro. That is not quite what happens. Wind is intermittent and is an excellent source when the wind is blowing. Hydro is energy limited: a plant may be sized to meet peak capacity needs, but it cannot run for extended periods at maximum because there will be insufficient water supply.

Another factor that must be considered is whether the generating source can be dispatched as needed. Hydro generation can be started and stopped when required, and typically the only constraint is the availability of water. If there is water available in the reservoir, the plant can be run on demand. Wind is quite different. It is available only when there is wind. Nuclear generation is different again, as it is capacity-constrained but essentially non-dispatchable. A nuclear plant is typically used for base loads. The reactor output is not easily altered, but the generator output can be changed simply by reducing the flow of steam coming from the reactor to power the generator turbine. In this case, source steam is fixed, but steam used may vary, resulting in a discharge of excess steam to waste. While highly inefficient, this method has been used.

Utilities in North America are all different, some based largely on energy-constrained resources (Canada), and some based on capacity-constrained resources (most of the US). Many of the utilities in the Pacific Northwest are hydro based and are energy constrained. Adding intermittent generation capacity such as wind or solar to these systems may result in different approaches. As these resources are increasing, the concepts used for operations for more than 100 years are often inadequate to address the growing needs of the new paradigm.

Unlike the natural gas system, the electrical grid has a significant constraint that creates a challenge in its operation. The natural gas system has a structure of pipelines that collect the gas at wellheads and deliver it to customers. The main trunk lines operate at a high pressure, and the actual pressure may vary. At any given time, there is a large quantity of gas in the pipeline, and if the line pressure is increased by 20%, the quantity of gas in the line increases by 20%. This is real storage that is often used. I recall a pipeline failure when I was living in Toronto. The single TransCanada Pipeline gas system from Alberta to Toronto ruptured and was shut down in Northern Ontario. The winter weather was cold, and I was expecting a serious problem. Would we have to evacuate the city? After about 3 days, my curiosity got the best of me and I called an engineer friend at the company that ran the pipeline. “No problem,” he said. “We will have the line fixed in 5 days, and there is enough gas in the line to keep us all warm for almost 2 weeks.” That was remarkable. At the sending end, they can pump gas into the pipe, and should they wish to pump more, they simply allow the pressure to go up. It is simple and effective.

The electric grid is different. There is no storage, so the utility has adjusted generation continuously for more than 100 years to maintain the balance between supply and demand. This was a relatively simple task, because any imbalance resulted in a change in the system frequency. Old electric clocks would run fast or slow if the frequency was above or below the nominal level. The generators making the electric power would all speed up or slow down together if the load was less or more than the supply. Utilities relied on speed governors on generators to maintain system frequency at a constant level, and this matched the supply with the demand. It worked for more than 100 years.
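The link between imbalance, frequency and governor action can be sketched in a few lines. This is a deliberately simplified model under assumptions of my own: a single equivalent generator, a purely proportional (droop) governor with no delay, per-unit quantities, and illustrative values for droop and inertia. Real systems add secondary controls that return the frequency to nominal.

```python
def simulate_droop(load_step_pu: float, droop: float = 0.05, inertia_h: float = 5.0,
                   f_nominal: float = 60.0, dt: float = 0.01,
                   duration: float = 10.0) -> float:
    """Return the frequency (Hz) reached after a sudden load increase.

    load_step_pu : extra load as a fraction of system rating (assumed value)
    droop        : governor droop, e.g. 0.05 means 5% (assumed value)
    inertia_h    : inertia constant in seconds (assumed value)
    """
    delta_f_pu = 0.0  # frequency deviation as a fraction of nominal
    for _ in range(round(duration / dt)):
        # Governor raises generation in proportion to the frequency drop.
        governor_response = -delta_f_pu / droop
        # Net imbalance between generation and load accelerates or slows the machines.
        imbalance = governor_response - load_step_pu
        delta_f_pu += imbalance / (2.0 * inertia_h) * dt
    return f_nominal * (1.0 + delta_f_pu)


print(f"{simulate_droop(0.10):.2f} Hz")
```

With these assumed values, a 10% load increase settles at roughly 59.7 Hz: the machines slow down until the governor has raised generation enough to match the new load, which is the self-balancing behaviour described above.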

The old system worked well but was not efficient. In recent years, it has been shown that adjusting load instead of generation was a more efficient means of achieving the same result. At the turn of the century, Enbala (now Generac Grid Services) was a pioneer in the use of managed demand to provide balance, and the company name Enbala reflects energy balance. (It should have been Power Balance, but most agreed that Enbala sounded better than Powbala.) We have a granted patent on the method that we pioneered.

The addition of intermittent generation that is displacing much of the older capacity constrained generation has brought a host of new issues, and the change has only started. Storage is playing a huge role, converting the intermittent resources into more conventional energy constrained resources. The maximum energy output is based on the amount of sunshine or wind that is available, and no amount of cash will purchase more fuel to increase the output. Utilities that have survived with capacity constrained supplies are suddenly finding new and different challenges in their operations. Utilities with hydro generation (US Pacific Northwest, and much of Canada) have learned that their hydro facilities may have the ability to store energy efficiently, so they are finding opportunities to sell storage: taking afternoon surplus solar capacity at low or even negative prices, only to sell it back at higher prices a few hours later.
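The economics of selling storage in this way reduce to a simple margin calculation. The sketch below uses hypothetical prices and a hypothetical function name; the round-trip efficiency parameter is an assumption added so the same formula also covers batteries, which return less energy than they absorb.

```python
def arbitrage_margin(energy_absorbed_mwh: float, buy_price: float, sell_price: float,
                     round_trip_efficiency: float = 1.0) -> float:
    """Margin in dollars from absorbing energy at one price and selling it later.

    A negative buy_price means the storage owner is paid to take the energy.
    """
    energy_returned_mwh = energy_absorbed_mwh * round_trip_efficiency
    return energy_returned_mwh * sell_price - energy_absorbed_mwh * buy_price


# Illustrative numbers: absorb 100 MWh of afternoon surplus at -$5/MWh,
# sell it back in the evening at $60/MWh.
print(arbitrage_margin(100.0, -5.0, 60.0))        # no storage losses
print(arbitrage_margin(100.0, -5.0, 60.0, 0.8))   # 80% round-trip efficiency
```

When the purchase price is negative, the owner earns on both legs of the trade, which is why even a lossy store can be profitable.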

These are the basic principles of the transition that is starting and will have significant impacts on operations in the future. Many changes will be needed to optimize, and many new opportunities will exist for new concepts. We now have low-cost communications that can provide services, and new technologies in protection and control will provide many new ways of doing much more. Generac Grid Services is striving to position itself to be the leader in this area, as the rewards will be potentially large. It was heartwarming last week, to see an investment house see the same potential and recommend the company stock to investors. ii

i Clean Energy Pathways to meet British Columbia’s Decarbonization Targets; Clean Energy Research Centre (CERC), University of British Columbia January 2022, Part 1

ii https://www.cnbc.com/2022/06/02/ubs-says-generac-is-a-top-pick-with-more-than-80percent-upside.html

Urgent Distribution Protection System Upgrades Are Needed

A recent article on LinkedIn claimed that in the last few months solar and wind have provided about 14% of total US electrical energy. That seemed large until one realized that the electric grid provides only 20% of all energy needed. The percent of total energy provided by wind and solar is therefore less than 3%, and getting from 3% to 100% will be a huge challenge. It is unlikely that the industry can grow wind and solar resources fast enough to achieve net-zero emissions by 2050 per US policy, which is essential to combat climate change and keep the global temperature increase below 1.5°C.
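The "less than 3%" figure follows from multiplying the two shares quoted above. A minimal check, with a function name of my own choosing:

```python
def share_of_total_energy(share_of_electricity: float,
                          electricity_share_of_total: float) -> float:
    """Share of ALL energy supplied by a source, given its share of electricity."""
    return share_of_electricity * electricity_share_of_total


wind_solar = share_of_total_energy(0.14, 0.20)
print(f"Wind and solar: {wind_solar:.1%} of total energy")
```

The calculation treats the 14% and 20% figures as exact, so the result is only as good as those two inputs.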

To get to net-zero, many issues need to be carefully considered. One leading issue is protection of the electric distribution grid, that is, the ability to identify and isolate faults on the system. These systems have the potential to cause chaos as we trend toward clean energy. The electric grid is a collection of wires, cables, towers and poles. The transmission grid is a real grid that can send power between two points in either direction. It is possible to put power into the grid in one location and take it out in several other locations far away.

The distribution system is not a grid at all. It is designed to deliver power from a substation to users. The system was never intended to deliver power from users to a substation. This seems strange, as the two systems are made from the same components that transmission systems use to deliver power in either direction.

Some Distribution Infrastructure Is 100 Plus Years Old and Potentially Dangerous

When electric utilities started, with Thomas Edison in 1882, there were lines that went from the generating station on Pearl St in New York to Edison’s customers. As the systems grew, these distribution systems were built in many cities, and today, some of the older cities have operating distribution systems installed more than 100 years ago. These systems were cutting edge when installed, but in many cases, they have remained in a similar state since installation. In 2021, one of my students brought in a piece of a cable that had just been taken out of service from a local distribution system in a small town. The conductor was strands of copper wire wrapped around a hemp rope used to carry the overall weight of the conductor. That cable was installed more than 100 years ago and was replaced recently.

A Transmission Protection System Is Used to Identify and Isolate Faults

Transmission systems have experienced a different history and the systems are more modern and sophisticated. In the 1950s, high voltage lines were generally either 138 kV or 230 kV. Growth was rapid and energy delivered doubled every 10 years. At any time in the mid-century, half of the transmission system was less than 10 years old. Today the backbone voltages for transmission range between 500 kV and 735 kV.

A transmission protection system is used to identify and isolate faults. A lightning strike that hits a tower and causes a flashover fault must be isolated within a few cycles to maintain system stability. The protection systems located in substations monitor line voltages and currents and can detect even small faults rapidly. These systems can identify the faulted line and the direction in which the fault is located. The actual fault may be on a given line, or on a line that is connected to the next substation in the grid. The protection system needs to be able to locate the fault and clear it rapidly.

Communications between line ends are the key tool to doing this well. If there is a fault that appears to be on one line, the system will send a signal to protection systems at the other end of the same line, and if the other end sees a fault that is toward the initial station, both ends of the line are tripped immediately. This system has worked well but has been dramatically helped with the introduction of fibre optics.
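The two-ended logic described above can be written as a short decision rule. This is a sketch of the idea only, with hypothetical class and function names; real relays use far more detailed measurements, timing and fail-safe behaviour.

```python
from dataclasses import dataclass


@dataclass
class RelayView:
    """What the protection at one end of a line currently sees (hypothetical model)."""
    fault_detected: bool
    fault_toward_other_end: bool  # True if the fault appears to lie along this line


def should_trip_line(local: RelayView, remote: RelayView) -> bool:
    """Trip both ends only if each end sees a fault in the direction of the other."""
    return (local.fault_detected and local.fault_toward_other_end
            and remote.fault_detected and remote.fault_toward_other_end)


# Fault on the protected line: both ends look "into" the line and see it.
internal = should_trip_line(RelayView(True, True), RelayView(True, True))
# Fault on the next line beyond the remote substation: the remote end sees
# the fault behind it, so the protected line stays in service.
external = should_trip_line(RelayView(True, True), RelayView(True, False))
print(internal, external)
```

The value of the communication link is visible in the second case: without the remote end's view, the local relay alone could not tell the two faults apart.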

Innovative Maintenance Systems Changed the Monitoring Game

Early in my career, utilities built dedicated microwave radio systems that provided communications for transmission system protection. These systems were costly to install and maintain. The sites were often on mountain tops, with heavy loads of snow in winter, requiring cable cars, snow cats, or helicopters for maintenance. Fibre optic technologies have totally changed these systems. Our transmission grid is now well protected with sophisticated protection and control systems. Today, our transmission grid is designed and operated to allow faults to occur with no loss of service. There is always another path available and in operation that can seamlessly replace any single loss.

Distribution Systems Need Advanced Systems

Distribution facilities need protection system advances. Existing distribution protection is almost the opposite of transmission protection and is based largely on concepts that have been in use for 100 years. Most distribution is either designed or operated in a radial fashion. A failure of any single feeder may result in a power loss for everyone on the entire length of that feeder. Faults are detected with crude overcurrent systems that operate in almost identical fashion to a household fuse or circuit breaker. There have been some advances over the years, but the principles remain largely unchanged.

The addition of renewable energy sources or storage via distributed energy resources (DER) systems is causing real strain for utility protection engineers. If a distribution feeder is long and has enough DER devices connected to provide the current drawn by a fault further down the line, the substation may not even detect the fault, and the line may remain in service without isolating the fault, becoming a fire hazard. Some utilities have been shutting down distribution lines during periods of heat and wind. The presence of a high penetration of DER facilities may exacerbate this situation.
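The masking effect can be illustrated with a very rough model. The sketch below assumes the fault draws a fixed current and that whatever the DER devices supply is simply subtracted from what the substation must supply; actual current sharing depends on feeder impedances and inverter behaviour. The function name and all numbers are hypothetical.

```python
def substation_detects_fault(fault_current_a: float, der_contribution_a: float,
                             pickup_a: float) -> bool:
    """True if the substation overcurrent element would see enough current to operate.

    Simplification: the substation supplies only the part of the fault current
    that the DER devices along the feeder do not.
    """
    substation_current_a = max(fault_current_a - der_contribution_a, 0.0)
    return substation_current_a >= pickup_a


# Illustrative case: a distant fault draws 400 A and the relay picks up at 300 A.
print(substation_detects_fault(400.0, 0.0, 300.0))    # no DER on the feeder
print(substation_detects_fault(400.0, 150.0, 300.0))  # DER supplies 150 A of the fault
```

In the second case the substation sees only 250 A, which looks like ordinary load, so the fault stays energized even though the overcurrent setting has not changed.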

To combat these pressures and needs, it is time to migrate some of the options that have been successful in protecting the transmission grid. Policy may require a module in every DER site that can detect a fault and communicate back to the substation protection system—and there may be another hurdle.

Protection engineers are conservative and careful. They are unlikely to accept a DER vendor giving signals to the protection system, so a module created by the same group that designed the protection system will likely need to be installed with the DER system. Communications are available at low cost through internet or cellular systems. Unlike the transmission system, which needs to clear faults in less than 0.1 second, a distribution system that clears a fault in several times that length of time would likely be adequate.

The world around the power grid has advanced dramatically in the last 100 years. Transmission systems have grown with these advances, but the distribution system, to a large extent, remains locked in the past. There is almost certainly a big opportunity for people with systems that advance distribution protection, allowing much higher penetration of DER facilities in what will soon be Grid 2.0.

Grid Orchestration Technology Can Solve Challenges from a Changing Grid

The electric grid consists of two significant and highly different sections: Transmission and distribution (T&D). The transmission system is relatively modern and efficient, but the distribution system is aging and will require considerable changes to modernize.

T&D Systems Are in Highly Disparate Stages

Prior to about 1980, transmission needs grew at about 7% annually, doubling in capacity every 10 years. At any given time, half of the transmission system was less than 10 years old. Voltages increased, and technology was added to protection systems to create a high-voltage network that can deliver power in almost any direction where there is available capacity. Today’s transmission system is relatively sophisticated and is well-optimized with the large generation facilities. Control centres have the capability to fully monitor and control these systems, while protection systems monitor operations and provide capability to identify and isolate system faults—often within less than a few electrical cycles. The ability to rapidly clear faults has allowed an increase in delivery capacity of the system and protection systems have become an important part of grid operations.
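The link between 7% annual growth, a 10-year doubling time and a system that is half new can be verified directly. The sketch assumes steady compound growth and that no older equipment is retired; the function names are my own.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years for a quantity to double under steady compound growth."""
    return math.log(2.0) / math.log(1.0 + annual_growth)


def share_newer_than(annual_growth: float, years: float) -> float:
    """Fraction of today's system built within the last `years`,
    assuming compound growth and no retirements."""
    return 1.0 - (1.0 + annual_growth) ** (-years)


print(f"Doubling time at 7%: {doubling_time_years(0.07):.1f} years")
print(f"Share under 10 years old: {share_newer_than(0.07, 10):.0%}")
```

At 7% the doubling time is a little over 10 years, and just under half of the system is less than a decade old, which matches the rule of thumb above.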

The distribution system, for the most part, is in almost the exact opposite position to the transmission system. Distribution delivers power to customers from local substations. Typical voltages for distribution are less than 44 kV. Large industrial customers may be connected directly to the transmission system, while commercial and smaller industrial customers may be connected at the primary distribution levels. Residential customers are typically connected at 120 V, 208 V or 240 V. These low voltages are created with local transformers that provide capacity for a few homes or small stores. Communications within the distribution system have been minimal until the recent implementation of smart meter technology. Additional barriers exist at this level because even these systems generally serve the billing departments before operations receive needed data. Fault clearing has generally been based on overcurrent measurements taken at the substation supplying the distribution feeder. This form of protection operates much like a fuse or a home circuit breaker. It is a crude setup and can be a slow means of clearing faults that occur.

T&D Approach Modernization from Opposite Sides of the Table

The transmission system is designed for gradual maintenance and updates. The system is generally capable of delivering power between any two points on the grid (in either direction) with little need to take control action, other than to ensure that no part of the system is overloaded. The North American Electric Reliability Corporation (NERC) requires that the system be operated with reserve capacity to ensure that any single contingency loss will not lead to a large-scale collapse or failure. Operations planners study system conditions prior to taking any line out of service for maintenance. While the single line removal may have no impact, these studies ensure that a subsequent fault during a maintenance outage on a line does not lead to a significant problem. The math behind this type of operation is complex, but it is important in maintaining system reliability.
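A heavily simplified sketch of the single-contingency idea follows. It treats a corridor as a set of parallel lines and checks only whether the remaining capacity covers the transfer; real studies use full power-flow models. The line names and capacities are hypothetical.

```python
def first_insecure_contingency(capacities_mw, transfer_mw, planned_out=()):
    """Return the first single line loss that leaves too little capacity
    to carry `transfer_mw`, or None if every single loss can be survived.

    capacities_mw: dict of line name -> capacity in MW (hypothetical data)
    planned_out:   lines already out of service for maintenance
    """
    in_service = {n: c for n, c in capacities_mw.items() if n not in planned_out}
    for lost in in_service:
        remaining = sum(c for n, c in in_service.items() if n != lost)
        if remaining < transfer_mw:
            return lost
    return None


lines = {"A": 400, "B": 400, "C": 300}  # hypothetical corridor
print(first_insecure_contingency(lines, 600))                     # None
print(first_insecure_contingency(lines, 600, planned_out={"C"}))  # A
```

With all three lines in service, any one can be lost. With line C out for maintenance, losing A leaves only 400 MW for a 600 MW transfer, which is the kind of condition a pre-outage study is meant to catch.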

The distribution system, however, is not as nimble. It is designed to deliver the expected peak power needed from a substation to all customers connected to the feeder. Any injection of power from distributed generation or storage systems along the feeder may impair existing operations in several ways and ultimately increase risk for the utility:

- Loss of power: Solar inverters are expected to shut down if there is a loss of power from the substation. This is generally the result of a loss of reactive power delivered by the substation causing inverters to stop operations. Such an event is an inherent characteristic of many inverters—they will not operate without a local supply of AC power connected. Inverters that are also used for backup supply during outages may not operate this way. Some utilities have set conservative standards limiting the capacity of connected inverters to significantly less than the minimum expected 24-hour demand from customer loads. This concept ensures that the loss of substation capacity will result in a collapse of the inverters that are operating; however, this concept also severely restricts the distributed capacity that can be connected on a feeder.

- Fires from reactive power: Fires are a threat to the utility. A generator on a line with many solar systems may supply enough reactive power for the inverters to maintain operations even if the line is tripped at the substation. In this case the fault may not be isolated, potentially causing local fires.

- Fires from distributed energy resources (DER): DER penetration is increasing, which can also raise fire risk. Fault current may be supplied by the distributed generation or storage, so the substation overcurrent relays may simply not see the fault, again raising the risk of fires at the fault site.

- Safety: The fourth and perhaps most difficult issue is one of safety. A line may be tripped off at the substation due to a fault, but some of the distributed systems might continue to power the feeder, even at a reduced level. Power line technicians dispatched to perform repairs could easily be at risk.
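The conservative connection limit described in the first item above can be expressed as a simple screening check. This is a sketch only: the 50% margin and the load figures are hypothetical, and actual utility rules vary.

```python
def inverter_headroom_kw(hourly_load_kw, connected_inverter_kw, margin=0.5):
    """Inverter capacity that could still be connected under a rule that
    caps total inverter capacity at a fraction (`margin`, an assumed value)
    of the minimum expected demand over the period."""
    limit_kw = margin * min(hourly_load_kw)
    return limit_kw - connected_inverter_kw


# Hypothetical 24-hour feeder demand in kW
load = [900, 850, 800, 820, 900, 1100, 1400, 1600, 1700, 1750, 1800, 1850,
        1900, 1850, 1800, 1750, 1800, 2000, 2100, 2000, 1700, 1400, 1100, 950]
print(inverter_headroom_kw(load, connected_inverter_kw=250))  # 150.0
```

Because the cap is tied to the lowest demand of the day rather than the peak, the allowable distributed capacity is small compared with what the feeder delivers at peak.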

Utilities Hedge Against Distribution System-Related Risks

Some utilities faced with high penetrations of distributed generation and storage have resorted to shutting power off to entire areas during periods when fire risks are severe and winds are strong. With increasing use of distributed generation, these problems must be solved with solutions that provide a safe operating state, regardless of local wind and weather. Protection systems will be required to address the changing environment. Communications between DER sites and the substation will also be needed. In the past, such a communications system was expensive, but with modern technology, secure communications can be implemented cost-effectively.

Generac Grid Services’ Technology Can Help

The key to resiliency while keeping fire risk low is grid orchestration technology. Utilities should equip distributed sources, either generation or storage that have the capacity to provide either voltage or power support to the grid, with systems that can identify nearby fault conditions while relaying information to substations in a form that can be applied directly. There are a few companies that manufacture and supply protection systems for utilities that have shown real leadership in this area.

In several columns, I have addressed the strong need for partnerships between utilities, companies providing optimization and control, and utility customers. In this case, the opportunity for partnerships extends to companies that provide innovative protection systems for the future. The electric grid is the largest system on the planet, and in the past, the subsections of the grid have been able to operate almost independently of one another. With the rapid growth of distributed generation, storage, and demand management, time will soon mandate the need for smart partnerships that can be profitable for all participants.

Sanctions Spur Time for Innovation

Geopolitical events are accelerating a state of rapid change for the energy industry. Prices for fossil fuels are likely to rise in the near term as fallout from Russia’s invasion of Ukraine, resulting in an accelerated transition to electricity to replace and reduce fossil fuel use. The existing electric grid is relatively small, delivering only about 20% of total energy. Expanding the electric grid will require considerable time, as large T&D projects may require more than 20 years to complete.

Reducing GHG Doesn’t Always Mean a Switch to Renewables

Generally, the industry suggests switching from fossil fuels to renewable resources. The real issue is emissions, which can also be addressed by reducing demand or increasing the efficiency of use. The average efficiency in the delivery of useful work from primary energy is less than 35%, which is one actionable opportunity to reduce emissions in the near term.

Electric utilities have found a transition to renewable supply to be challenging. The target of replacing coal-fired electricity generation has been largely achieved through the use of natural gas. While it may seem counterproductive to replace one form of fossil fuel with another, the emission reduction resulting from a transition from coal to natural gas is large. Coal produces about double the emissions produced by natural gas for the same energy. In addition, natural gas combined cycle plants are about twice as efficient as the coal-fired generation that they replace. Emissions may be reduced by up to 75% using this technology, an excellent example of reducing emissions by increasing efficiency. Best of all, the technology used is off the shelf.
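The 75% figure follows from multiplying the two effects. A minimal sketch, using the round numbers quoted above as defaults (half the emissions per unit of fuel energy, twice the conversion efficiency):

```python
def emissions_vs_coal(fuel_factor_ratio=0.5, efficiency_ratio=2.0):
    """Emissions per unit of electricity from gas, relative to coal.

    fuel_factor_ratio: gas emissions per unit of fuel energy divided by
                       coal's (about 0.5 per the text)
    efficiency_ratio:  combined cycle efficiency divided by coal plant
                       efficiency (about 2 per the text)
    """
    return fuel_factor_ratio / efficiency_ratio


relative = emissions_vs_coal()
print(f"Relative emissions: {relative:.2f}, reduction: {1 - relative:.0%}")
```

Half the emissions per unit of fuel, applied to half the fuel, leaves one quarter of the original emissions for the same electricity delivered.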

The Grid Should Take Lessons from Telcos for Grid Modernization

Prior to about 1982, growth in electrical energy use was 6-8% annually, doubling every 10 years. Utility companies became accustomed to this rate of growth. The T&D and generation systems grew, using new technology. At any time prior to 1982, half of the existing generation and transmission system was less than 10 years old. In 2022, more than 70% of T&D lines are aging out of commission.

One key technology that advanced transmission protection systems has been the availability of telecommunications. These systems made it possible to identify and isolate faults quickly, in order to maintain system stability. The transmission grid has become a system that can reliably and efficiently deliver energy and capacity in either direction between any two connected points. As these systems delivered large amounts of energy, the cost of modern telecommunications was relatively small and was easily justified.

The distribution system, however, did not capture similar benefits. Concepts used up to 100 years ago remain common. The protection system to identify and clear faults has been largely based on detecting overcurrent, much like a fuse. The design has created a one-way concept, to deliver power to customers from a central source. This structure has been a major barrier to the use of distributed resources. Today, new technology is available that can easily overcome this issue. Implementation will be essential if the concept of distributed energy is to become widespread.

Smart meters have been an early step in providing distribution system communications. In the past, utilities learned about power loss issues from customers calling to say that their lights were out. Smart meters now send a “last gasp” message to report that the power has failed and there will be no further data. Utilities can now identify both the location and scope of losses immediately. This data often provides clues to the problem location. Smart meters also deliver large amounts of other useful information. But smart meters are only a small start toward potentially valuable changes.
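One way last-gasp messages can point to a problem location is by grouping them by the upstream transformer. The sketch below is illustrative only: the meter and transformer identifiers, the data layout, and the 80% threshold are all hypothetical, not a description of any particular AMI system.

```python
from collections import Counter


def suspect_transformers(last_gasps, meters_per_transformer, threshold=0.8):
    """Transformers where at least `threshold` of the connected meters
    reported a last gasp, suggesting the outage is at or above that point.

    last_gasps:             list of (meter_id, transformer_id) tuples
    meters_per_transformer: dict of transformer_id -> number of meters served
    """
    counts = Counter(transformer for _meter, transformer in last_gasps)
    return sorted(
        t for t, n in counts.items()
        if n / meters_per_transformer[t] >= threshold
    )


last_gasps = [("m1", "T1"), ("m2", "T1"), ("m3", "T1"), ("m7", "T2")]
meters_per_transformer = {"T1": 3, "T2": 4}
print(suspect_transformers(last_gasps, meters_per_transformer))  # ['T1']
```

Here every meter on T1 has gone dark, so T1 (or something upstream of it) is the likely fault point, while the single report on T2 looks more like an individual service problem.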

Cutting-Edge Technology Is a Must

While communications have enabled optimal operation of the transmission system, the distribution system, based on old technology, has not modernized significantly. What opportunities could be available from the distribution system that would help with the transition to a future with lower emissions? Answers to this question would be useful to fully understand and identify opportunities.

A conventional distribution feeder may have load limits based on the length of the feeder. A short feeder (less than 1-2 miles) will have a limit based on the conductor or wire used to deliver the power. Above the thermal limit, the wire will become too hot. Lines longer than about 2 miles are generally limited by low customer voltage. The low voltage limit may be reached with the line current operating at a fraction of the thermal limit of the wire. There may be an opportunity to increase feeder capacity by managing the receiving voltage.
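The shift from a thermal limit to a voltage limit can be illustrated with a rough resistive voltage-drop model. All parameter values below (conductor resistance, thermal rating, nominal voltage, allowed drop) are hypothetical and were chosen so the crossover lands near 2 miles; reactance and power factor are ignored.

```python
def feeder_current_limit(length_miles, thermal_limit_a=400.0,
                         ohms_per_mile=0.45, nominal_volts=7200.0,
                         max_drop_fraction=0.05):
    """Return (current limit in amps, which limit binds) for a feeder,
    using a simple I * R voltage drop with assumed parameters."""
    allowed_drop_v = max_drop_fraction * nominal_volts
    voltage_limit_a = allowed_drop_v / (ohms_per_mile * length_miles)
    if voltage_limit_a < thermal_limit_a:
        return voltage_limit_a, "voltage"
    return thermal_limit_a, "thermal"


for miles in (1, 2, 5):
    amps, binding = feeder_current_limit(miles)
    print(f"{miles} mi: {amps:.0f} A ({binding} limit)")
```

In this sketch the 5-mile feeder is limited to well under half of the conductor's thermal rating, which is the unused capacity that better voltage management could help recover.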

At the same time, the feeder delivers two quantities that are needed by most customers; these are real and reactive power – or Watts and VARs. Watts must come from a generator, but VARs can easily be created anywhere. But both Watts and VARs consume line capacity. Eliminating the delivery of VARs and creating them at the load site is an option to increase the feeder capacity.
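The capacity effect of delivering VARs can be sketched with the standard relationship between real, reactive, and apparent power. The feeder rating and reactive load below are hypothetical numbers picked for easy arithmetic.

```python
import math


def max_real_power_kw(feeder_rating_kva, reactive_kvar):
    """Real power a feeder can carry at its kVA rating while also
    delivering `reactive_kvar`, from S^2 = P^2 + Q^2."""
    return math.sqrt(feeder_rating_kva ** 2 - reactive_kvar ** 2)


rating = 5000.0  # hypothetical feeder rating in kVA
print(max_real_power_kw(rating, 3000.0))  # 4000.0 kW while delivering VARs
print(max_real_power_kw(rating, 0.0))     # 5000.0 kW if VARs are made locally
```

In this example, supplying the reactive power at the load site frees 1,000 kW of real power capacity on the same wire.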

VARs can be created by generators, capacitors, inverters, or specialized solid-state devices called Static VAR Compensators (SVCs). The SVC may be a costly but effective solution.

Generac Grid Services learned a lesson some time ago. Devices dedicated to providing one service may be effective but are often not cost-effective. A battery used for day/night storage is an example. While costs have fallen dramatically, a battery that captures solar energy by day, to be used during the night, delivers a marginal benefit at best. However, if the battery can also be used to mitigate demand peaks for a local utility, or provide fast frequency support, the system becomes fully feasible.

Modernization of the distribution system has many features that may be opportunities for innovative solutions that will address several needs and improve operations at the same time. A few examples may be as follows:

- Systems used for backup sources may be able to be used to deliver reactive power at customer sites–raising feeder capacity and managing local voltage.

- Backup sources may be used to deliver peak capacity reduction for utilities, reducing the need for expensive peak capacity and reducing delivery losses.

- Local demand levels may be managed to provide needed flexibility for grid management in matching supply and demand.

It is apparent that the small electric grid will need to transition rapidly to become a major means of delivering energy to customers. The available time to achieve this change is too short to allow traditional means of expansion to be used. This is an opportunity for innovation and thought to achieve cost-effective results that can support rapid change.

Reducing Emissions Quickly – Setting Priorities

In 1992, the Rio Earth Summit in Brazil recognized the need to reduce emissions. At the time, 87% of global energy needs were met with fossil fuel. It was agreed that this needed to change dramatically. More recently, it has been suggested that to stay within the target temperature increase, a net-zero target needs to be achieved by 2050. We are now slightly more than half-way through the time between 1992 and 2050, and the reliance on fossil fuels has declined to 83%, but, in fact, more fossil fuel is now used annually than in 1992. There is a common view that the target is to displace all fossil fuel energy sources with renewable sources. Between 2010 and 2020, the US renewable share (solar and wind) grew from 1.06% to 4.6% of total energy. Renewables will obviously be an important asset in future, but the transition appears to be too slow to meet the net-zero target in 2050.

Recent climate events have brought a strong focus on this issue. Net-zero emission is not a final solution but is simply the point at which the weather impacts stop getting worse. We may see increases in weather events until net-zero is achieved, declining only as levels of atmospheric carbon decline very slowly after that time. Without other means, the decline could take more than 1,000 years.

To minimize the total atmospheric carbon when net zero is achieved, immediate changes are needed to reduce emissions. This may be achieved through conservation or by increasing efficiency of energy production, delivery and use. The current efficiency of the energy systems, from primary sources to the delivery of useful work, is less than 35%¹. Action is important in both the transition to clean energy and in the delivery and use of energy. As these activities are independent, simultaneous improvements may be implemented in both areas.

The electric grid currently delivers about 20% of total energy needs. Electrification of all fossil fuel demands would require a dramatic increase in electric power and energy capacity, and this has been seen to be unfeasible or near impossible in the short term. Efficiency improvements and/or conservation activities can potentially provide immediate emission reductions.

As the electric grid has limited near term capacity for electrification, the ratio of emissions to energy required is a useful tool to be used to establish priorities. A higher ratio would indicate a greater reduction in emissions for the electrical energy required. This would maximize emission reduction results in the near term.

Two examples receiving a lot of attention are the conversion of natural gas heating systems to electric heat pumps, and the conversion of gasoline-powered (ICE) vehicles to battery electric vehicles (BEV). Applying a ratio approach to these two examples enables us to see which would have more immediate and greater benefits in reducing emissions without overtaxing the electric systems.

In comparing these two conversion strategies, the following assumptions are made:

- Natural gas is assumed to create 55.82 kg of emissions per GJ

- 1 L of gasoline burned will create 2.3 kg of emissions and deliver 0.0342 GJ of energy

- Gas furnace to heat pump conversion:

  - Electricity is assumed to be clean, producing 0 kg/GJ

  - Heat pump COP (coefficient of performance) will be 2-4

  - Gas furnace efficiency will be 95%

- ICE vehicle to BEV conversion:

  - Toyota RAV 4 (best mileage in the small SUV class): EPA rating for combined city/highway use of 7.85 L/100 km

  - Tesla Model Y (Long Range AWD): EPA rating for combined city/highway use of 27 kWh/100 mi, equivalent to 1.88 L/100 km

A heat pump will deliver 2 to 4 times as much heat energy as the system consumes. We will consider two operating states, one at COP = 2.0 and one at COP = 4.0. At COP = 2, the ratio is 124, while at COP = 4, the ratio is 248. By comparison, the ratio for a transition from a RAV 4 to a Tesla Model Y is 692. Considering that over 70% of new vehicle sales are for either light trucks or SUVs, and the RAV 4 was identified as one of the most efficient of the SUV vehicles, the transition that achieves the maximum emission reduction per unit of electrical energy used is the conversion of ICE vehicles to BEV technology. Furthermore, if the average COP over a year for the heat pump is significantly less than 4 (which is likely), the resulting difference is even more dramatic. The BEV may give up to almost 4x the emission reduction per kWh of electricity consumed.
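The ratio approach can be sketched as avoided emissions divided by the electricity required. The functions below are one plausible reading of the listed assumptions, not the worksheet behind the figures quoted above. In particular, the Tesla rating is read as 27 kWh per 100 miles (the usual EPA convention), which is an assumption, and the function names are illustrative. Because the result is sensitive to the furnace efficiency, the COP, and the unit conventions behind the vehicle ratings, the printed values should be treated as illustrative rather than as a reproduction of those figures.

```python
KM_PER_MILE = 1.609344
GJ_PER_KWH = 0.0036


def heat_pump_ratio(gas_kg_per_gj=55.82, furnace_efficiency=0.95, cop=2.0):
    """kg of emissions avoided per GJ of electricity used, based on
    replacing 1 GJ of heat delivered by a gas furnace."""
    avoided_kg = gas_kg_per_gj / furnace_efficiency  # furnace fuel emissions
    electricity_gj = 1.0 / cop                       # heat pump input
    return avoided_kg / electricity_gj


def vehicle_ratio(ice_l_per_100km=7.85, kg_per_litre=2.3,
                  bev_kwh_per_100mi=27.0):
    """kg of emissions avoided per GJ of electricity used, per 100 km
    driven. Assumes the BEV rating is expressed per 100 miles."""
    avoided_kg = ice_l_per_100km * kg_per_litre
    electricity_gj = bev_kwh_per_100mi / KM_PER_MILE * GJ_PER_KWH
    return avoided_kg / electricity_gj


for cop in (2.0, 4.0):
    print(f"Heat pump, COP {cop}: {heat_pump_ratio(cop=cop):.0f} kg/GJ")
print(f"ICE to BEV: {vehicle_ratio():.0f} kg/GJ")
```

Exposing the efficiency, COP, and consumption figures as parameters makes it easy to test how the ranking of the two conversions changes under different assumptions.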

BEV technology may have an additional benefit: the potential to allow the electric utility to manage the timing of charging, using either a rate structure or a real-time control facility to manage the use of home chargers. With this concept, the utility may be able to increase the capacity use of its delivery system while managing and reducing system loss.

A careful plan is needed to achieve maximum emission reduction using the available electricity supply. Other conversions may need to be considered, but given that the common recommendations are the electrification of home heating and personal vehicles, BEV technology should be considered a priority, even while acknowledging that both are currently major sources of emissions to the extent that they rely on fossil fuels.

There may be other benefits hidden in this transition. Homeowners may find solar installations may deliver added benefits with the inclusion of battery storage. With this addition, the system can provide additional home backup capacity and time of use cost mitigation. By participating in partnerships with utilities, owners can further benefit by collecting revenue for demand management, frequency regulation and reserves for the utility. The needs for these services will have growing value as the penetration of intermittent generation increases. Generac may improve value with a dual focus, on integrating renewables, and on maximizing the benefits for both homeowners and utilities.

Communications – the Key for Grid 2.0

For many years, people in the utility business have looked at their delivery system as two distinct entities: transmission and distribution. The transmission network is a modern, smart, and optimized system. The transmission grid can generally deliver capacity and energy between any two points, in either direction, with few issues. Utilities routinely trade energy in either direction even where the only interconnection has multiple other utilities in the path between the exporter and importer.

By contrast, the distribution system, often referred to as a “black hole,” is seen as a one-way network that has limits and constraints on its ability to deliver energy. In some major cities, parts of the distribution system may be almost 100 years old. Outages often must be reported by someone calling to report that their “lights are out.”

Why does this stark contrast exist in such an important system? What can be done about this – and when might we see real change?

The answer may be simple, but the impacts could be large. Transmission systems started in much the same form as the current distribution system exists. Transmission lines were built to connect generation to loads with little ancillary equipment to protect them.

The photo shows a transmission line that was built in about 1910 that went from a hydro plant on the Kootenay River in British Columbia to gold mines in Rossland, about 32 miles away. The line operated at 32 kV and at that time was claimed to be the longest high voltage line anywhere. Little “roofs” on each pole protected the insulators from snow that someone thought might cause a short circuit in the line.

As the transmission system grew, standards for reliability were established and maintained. Communications soon became a critical factor in system operation. Protective equipment at each end of the line could measure voltages and currents in the line and could detect a fault – directionally on the line. By adding a communications link and comparing similar measurements at both ends of a line, the protection system could identify a faulted line and take it out of service. The communications system between both line ends provided critical information for this process. As the systems advanced, isolating and clearing faults became time sensitive. Leaving a fault on the system for more than a short time could cause the entire system to collapse. Protection systems were designed and implemented to address this issue. Some systems now can identify and clear a single-phase fault. Many utilities found communications to be of such importance that they built and operated dedicated microwave radio systems exclusively for transmission system protection. In recent times, these communications systems have been largely migrated to fiber optics, but this capability has enabled these systems to operate reliably as they do today.
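The idea of comparing measurements from both ends of a line can be sketched as a simple current comparison: with no fault on the line, the current entering one end should closely match the current leaving the other. This is a simplified illustration of one common scheme, not a description of any specific relay; the tolerance and current values are hypothetical.

```python
def line_fault_detected(sending_end_amps, receiving_end_amps,
                        tolerance_amps=50.0):
    """Flag a fault on the line when the currents measured at the two
    ends differ by more than an assumed tolerance. The comparison relies
    on a communications link between the two line ends."""
    return abs(sending_end_amps - receiving_end_amps) > tolerance_amps


print(line_fault_detected(800.0, 795.0))   # False: normal through-flow
print(line_fault_detected(2400.0, 300.0))  # True: current is leaving the line
```

Without the communications link, neither end has the other's measurement, so this comparison is impossible and protection has to fall back on slower local methods.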

The distribution system was largely ignored in this respect because dedicated communications for small, distributed loads were cost prohibitive. But we are now at a state where this is changing rapidly. The new distribution system may become a system that will have flexibility to do many tasks that have been impossible in the past.

One example of older methods stands out in my memory. Many small distribution substations exist in what is known as a “loop” configuration. They often have a transformer connected directly to an incoming line. If a fault is detected inside the transformer, it is essential that the incoming line be tripped immediately at the sending end to minimize damage to the transformer. In a transmission network, a transfer trip signal would be communicated to the sending end, and that would trip the line circuit breaker, removing power from the faulted transformer. But in older distribution systems, where communication is not available, a large spring-loaded bar may be installed that applies a short circuit on the incoming line. The protection system at the other end sees a line fault and trips the line. Problem solved – in a very crude way.

Recently, the addition of distributed energy sources has caused issues that will drive a dramatic change in the distribution system. These systems have caused problems under the old model, but communications have now advanced to the point that cost-effective measures may easily be undertaken that will bring a full-scale change to the distribution system.

Consider a distribution feeder with many solar-powered sites along the line. Should a fault occur near the remote end of the line, it is possible that the DER sites along the way will supply all fault current, and the substation supplying the feeder may not see the fault at all. This fault would not be cleared, potentially causing real damage – or fires. Utility distribution planners see many similar issues and their only recourse has been to place restrictions on the numbers and capacities of DER facilities on the line. In doing so, they have caused frustration and anger among people with intentions to supply clean energy to the grid. The addition of communications will enable full integration of DER facilities, allowing protection systems to be utilized. This, in turn, should enable maximized use of DER capacity to support a transition to clean energy.
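The way DER can hide a fault from the substation can be shown with a toy model. It assumes the total fault current is fixed by the fault itself and that whatever the DER sites supply is current the substation relay does not see. The pickup setting and current values are hypothetical.

```python
def substation_relay_trips(fault_current_a, der_contribution_a, pickup_a):
    """Return True if the substation overcurrent relay would operate.
    Toy model: the relay sees the fault current minus whatever the
    DER sites along the feeder supply."""
    substation_current_a = max(fault_current_a - der_contribution_a, 0.0)
    return substation_current_a >= pickup_a


# Hypothetical remote fault drawing 600 A, relay pickup set at 500 A
print(substation_relay_trips(600.0, 0.0, 500.0))    # True: fault is cleared
print(substation_relay_trips(600.0, 250.0, 500.0))  # False: fault persists
```

In the second case the relay never operates, which is why protection that can communicate with the DER sites becomes necessary as penetration grows.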

The AMI or smart meter initiative has been an impressive start to enable communications. Technically, a utility can set up a mesh of customer meters in an area where they have direct communications with only a few of the meters. But the meters can interconnect among themselves and provide a full communications system for the utility, reporting on real time demand, voltages, and currents as well as billing data at all customer sites. This form of communication may provide a valuable link for metering, control and protection in future.

Some utilities have purchased cellular spectrum, intending to manage their system on a private network, while others are using a variety of other methods for communications.

The real value of new communications facilities in the distribution system, however, may go well beyond the electric system itself. We have lived more than 100 years with a system designed for the short peak demand capacity that MAY happen at some point in the year. The average demand on the grid is typically about half of the peak. By using communications to manage and optimize the entire system, including demand, the system can operate closer to peak capacity on a continuous basis. This increase in delivery capacity will be a valuable asset for utilities, and it will help to maintain low costs. Generac Grid Services has demonstrated that many load devices can be managed for the grid without impairing the operating needs of the customer. Similar management of voltages can be used to manage and optimize system losses.
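The gap between average and peak demand is usually expressed as a load factor. A minimal sketch with a hypothetical demand profile:

```python
def load_factor(demand_mw):
    """Average demand divided by peak demand over the period."""
    return (sum(demand_mw) / len(demand_mw)) / max(demand_mw)


profile = [30, 30, 50, 100, 40]  # hypothetical demand samples in MW
lf = load_factor(profile)
print(lf)      # 0.5
print(1 / lf)  # 2.0: energy multiple if the same peak were held continuously
```

A load factor of about one half means that the same wires could, in principle, deliver about twice the energy if demand were managed to stay near the peak level at all times. That is an upper bound, not a practical target.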

The skills being developed within Generac Grid Services will be ideal in building this grid of the future. This opportunity to include customer devices as an integral part of an optimized system, where customers are paid for their contribution, will be a challenge, but a rewarding achievement. The transmission and distribution systems, along with the supply and demand will be operated as a single optimized and seamless system. In such a system, everyone wins. Costs are minimized, and customers become a part of the process to fully optimize operations.

The Path Ahead May Have a Few Shocks

In a recent speech at an Asia Pacific Conference, Vaclav Smil, a well-known professor and writer of books on energy, offered some interesting statistics on progress to address climate change.

Time is short. We are targeting a transition to net-zero emissions by 2050. History has some interesting comparisons:

• It took 100 years for the world to convert from wood to 50% coal

• It took 100 years to move to 40% oil

• It has taken 70 years to go from no gas to 25% natural gas

The Rio Earth Summit took place in Brazil in 1992, and at that time the world obtained 87% of its primary energy from fossil fuel. That was 29 years ago, and today we are half-way between the Rio conference and the target for net-zero emissions.

The recent COP conference in Glasgow produced some interesting commitments and disagreements. China and India, two of the largest emitters, have committed to achieve net-zero by 2060 and 2070, respectively, though in the short term, both continue to burn coal for electricity generation. Coal is one of the single largest sources of emissions, creating more than twice the emissions of natural gas and operating at about half of the efficiency of a natural gas combined cycle generation (CCGT) facility. A switch from coal to CCGT could reduce current emissions from electricity generation by almost 75%.

There are some interesting trends that are beginning to emerge. Between 2015 and 2019, total energy in the U.S. increased by 3.1%, and fossil fuel use decreased from 81.6% to 80%. A 27% decrease in coal use has largely been taken up by an 18% increase in natural gas use for generation, so when one factors in the efficiency gains in the use of natural gas generation, it is apparent that most of the transition off coal has been accomplished by a switch to natural gas. Germany has seen similar results. After 20 years of green initiatives, its dependence on fossil fuel remains at 78%, and in the last three years, its use of natural gas for electricity generation has increased by 50%.

It is apparent that despite major investments in solar and wind capacity, the transition to reduce emissions is progressing at a rate that may be too slow to achieve the target of net-zero by 2050. One reason for this is that the transition is not simple, and there are many factors within the existing energy systems that will be a challenge to change.

But another interesting factor has appeared in recent weeks. Countries in the EU that have not supported the German initiative to eliminate nuclear capacity and are continuing to utilize this fuel source have seen significantly better progress on emission reductions than countries that have followed the German initiative. France, which has generated more than 80% of its electricity from nuclear capacity for many years, has requested that Germany agree that nuclear is emission free and therefore should be classified as “green.” Germany has resisted this change, but there may be a shift in policy ahead. The topic was discussed at some length at COP26, and several countries including the U.S., U.K. and Japan supported the French position. There are also significant initiatives to move this activity forward in industry. Rolls Royce in the U.K. has received grants and investments of more than $500 million for development of modular nuclear generation facilities. Canada has ordered a 300 MW modular nuclear reactor from GE/Hitachi to be running in Ontario by 2028, and the U.S. government is promoting nuclear micro reactors. China and India are developing reactors based on thorium, and there are now 22 companies actively working on fusion technology, with promising results. At last, fusion may not be 50 years away.

What would a mix of renewables and nuclear energy do to the energy grid of today and to the products emerging to manage grid 2.0?

Nuclear energy historically has been unable to provide short-term flexibility, but it can ramp up or down over significant periods of time. Nuclear energy, initially installed in the 1960-80 period, needed storage to capture night surplus capacity to be used during daytime. The capacity was firm but did not change over a single day. Pumped storage was installed in many locations to meet this need. Ironically, the need for storage for nuclear capacity is the exact opposite of what is needed for solar and wind. Nuclear is firm with no ability for short-term flexibility, while solar and wind are intermittent and need short-term storage to make them dispatchable. The potential addition of nuclear capacity to the current mix may have significant benefits as it may well be a complementary resource to intermittent renewables.

There has also been a lot of interest in recent times about the use of green hydrogen and fuel cell technology. This concept relies on the use of surplus electricity, but with the existing amount of renewable capacity, it is difficult to understand where that might have been captured. But with an addition of nuclear capacity, there might be surplus electrical capacity that would enable the hydrogen concepts to thrive.

We live in a time of rapid change. The change is going to be essential if we are to meet the target of net-zero emissions by 2050. Several important changes will need to take place:

- We are very large consumers of energy, and our total energy system is less than 35% efficient. There is a growing need to improve efficiency, conserve and do more with less energy.

- There will be continued requirements to optimize the entire grid, from generation to loads, and this is an area where Generac Grid Services is a recognized leader.

- There will be a fast-growing need to increase the delivery capacity of our existing transmission and distribution systems, and again, Generac is well positioned to be an industry leader in this area. The new PWRgenerator that runs only periodically when needed is an ideal product to start on this new concept.

- There will be a need for direct air carbon capture to reduce the current levels of carbon in the atmosphere and sequester it.

For some, the COP26 conference was seen as a failure to achieve full agreement on some important points, but it has given me real hope and enthusiasm for a future where, with innovation and careful planning that can react quickly to changing objectives, we can achieve the needed results. Generac has a track record and products that position us well. In addition, we have a culture and management team that can accommodate the change and the flexibility that will define the winners in the next few decades.

Grid 2.0: What Is It?

Summary

The electric grid is entering a state of rapid transition. The current grid delivers less than one-fifth of the total energy needs of customers, and with the shift to clean energy, that share will increase dramatically.

Utility planners are quick to explain that grid design is complex and must meet important criteria:

- Deliver the annual peak demand, with redundancy to allow for the loss of a single critical component at peak demand without an overall failure.

- Maintain the supply-demand balance continuously in real time.

The current grid design has very little available capacity during peak periods.

As fossil fuel loads are electrified, more electrical power and energy will be needed. Estimates suggest that about 3x the current electrical energy will be required. That will be challenging, as grid additions can be slow and costly to install. New technology to enable this growth will be valuable.

Loss will also be an issue. Average delivery loss in the US is about 7%, and if delivered capacity is doubled, the loss may increase by up to 4x, because loss increases with the square of electrical current. With current annual US losses valued at $19B, there will be real value in managing and optimizing loss.
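The square-law relationship above can be checked with a few lines of arithmetic. The sketch below is illustrative only: it assumes a fixed line resistance and voltage, so that current scales directly with delivered power, and the function name `loss_multiplier` is hypothetical rather than part of any Generac tool.

```python
def loss_multiplier(delivery_ratio: float) -> float:
    """Return how much resistive (I^2 * R) loss grows when current scales by delivery_ratio.

    Assumes constant voltage and line resistance, so current tracks delivered power.
    """
    return delivery_ratio ** 2


baseline_loss_share = 0.07   # about 7% average US delivery loss (figure from the text)
delivery_ratio = 2.0         # delivered capacity doubled

absolute_growth = loss_multiplier(delivery_ratio)
# Loss as a share of the larger delivery: 4x the loss spread over 2x the energy.
new_loss_share = baseline_loss_share * absolute_growth / delivery_ratio

print(f"Absolute loss grows {absolute_growth:.0f}x")
print(f"Loss share rises from {baseline_loss_share:.0%} to about {new_loss_share:.0%}")
```

Under these assumptions the absolute loss quadruples, while loss as a share of the doubled delivery roughly doubles. Real feeders will differ with load shape, voltage and conductor temperature.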

Today, Generac has both the technology and the skills to contribute value, both in added capacity/energy delivery and in loss management.

Delivering More Energy through the Existing Grid

The existing grid CAN deliver more energy and more power, but this requires changes in the ways that the system is planned and operated.

The basic grid design, which has existed for more than 100 years, is intended to meet customer demand when and where needed. The utility was designed to deliver power from central generation to loads. Until recently, any thought of distributed generation was simply not considered. The utility was established to meet customer demand, ensuring that the customer always receives quality power with reliability. This concept has two distinct requirements: acceptable quality (voltage, frequency, etc.) and reliability. The system must deliver quality electric power with reserve capacity to allow the loss of a critical transmission or generation element at peak without causing a system collapse.

Grid 2.0 can increase delivery of power and energy while ensuring that these requirements are maintained.

The average load on the grid is typically about 50% of the peak demand. More power could be delivered during off-peak periods, when the grid has spare capacity. A combination of load management and distributed storage can meet this need by increasing the average power delivery and storing surplus energy near the load site for later use.

The other issue is voltage management. In many cases, the limited capacity during peak conditions is caused by low voltage at the customer site. This voltage issue can be addressed with dynamic voltage management, another strength that Generac can provide.

Managing Demand

The mandate to “meet customer demand” has been a real challenge in some areas. The utility MUST maintain the supply-demand balance on a continuous basis. If you turn on an air conditioner, one or more generators will increase their output levels to meet this change in demand. Consider an industrial arc furnace that can draw large amounts of power for very short periods. The utility must have capacity available to follow these changes continuously. Recently, large-capacity solar and wind generation has been added to the grid. These sources have generally not been dispatched and may be intermittent, so they require the same dynamic management as volatile loads: dispatched generation is adjusted to follow the intermittent generation so that total demand and generation are continuously balanced. The balancing task has grown with the addition of intermittent generation.
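The balancing task described above can be sketched as a simple subtraction: whatever demand is not covered by intermittent sources must be met by dispatched generation. The numbers below are hypothetical hourly values in MW chosen for illustration, and the function names are assumptions, not an existing utility API.

```python
def dispatch_required(demand, intermittent):
    """Dispatched generation needed in each interval so that supply equals demand."""
    return [d - g for d, g in zip(demand, intermittent)]


def ramps(series):
    """Change from one interval to the next; larger swings mean harder balancing."""
    return [b - a for a, b in zip(series, series[1:])]


# Hypothetical values (MW) for four consecutive hours
demand = [50.0, 55.0, 70.0, 65.0]
solar_wind = [5.0, 20.0, 30.0, 10.0]   # not dispatched, varies with weather

dispatched = dispatch_required(demand, solar_wind)

print("Dispatched generation:", dispatched)
print("Demand ramps:         ", ramps(demand))
print("Dispatched ramps:     ", ramps(dispatched))
```

In this made-up case the dispatched fleet has to follow a different, and at times opposite, ramp from the one implied by demand alone, which is the added balancing burden the paragraph refers to.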

Demand response systems appeared some years ago, specifically aimed at reducing the system demand during the daily peak, and thereby reducing the need to either start peaking generation or to purchase the needed capacity at peak prices.

With the inclusion of large amounts of intermittent generation, the issue has become more challenging, as both the supply and demand capacities are subject to intermittency. As renewable generation increases and conventional generation decreases, the problem becomes considerably more difficult to manage.

The immediate solution to this is demand management, a step beyond demand response. There are many load devices that can be managed with little or no impact on the users’ experience, and these are now being widely used. Systems may pre-heat hot water or pre-cool an air-conditioned building before the peak demand period to reduce the need for peak capacity. Much work has been done to optimize systems that provide this service.

The impact of this action is to reduce peak demand and shift some of the capacity to off-peak periods. This process also brings a less visible benefit: loss is reduced. The loss in the distribution system is proportional to the square of the current in the line. If one draws power at a constant rate, the loss will be significantly less (half as much, in this example) than if one draws the same amount of energy over the same period but draws zero for half of the time and double for the other half. Demand management can reduce loss.
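The comparison in the paragraph above can be worked through directly. This is a minimal sketch assuming equal-length intervals, a fixed line resistance and constant voltage, so that loss in each interval is proportional to the square of the current; the profile values are in arbitrary per-unit terms and the function name is hypothetical.

```python
def relative_loss(profile):
    """Sum of squared current over equal intervals; proportional to I^2 * R energy loss."""
    return sum(current * current for current in profile)


flat = [1.0, 1.0, 1.0, 1.0]      # constant draw
on_off = [0.0, 2.0, 0.0, 2.0]    # zero half the time, double the other half

# Both profiles deliver the same total energy at constant voltage.
same_energy = sum(flat) == sum(on_off)

loss_ratio = relative_loss(on_off) / relative_loss(flat)
print(f"Same energy delivered: {same_energy}")
print(f"On/off profile loses {loss_ratio:.1f}x as much as the flat profile")
```

The on/off profile carries the same energy but twice the loss, which is why flattening demand reduces loss even when total consumption does not change.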

Managing Voltage and Loss

Lowering the peak and increasing the capacity factor (average demand/peak demand) makes room to deliver more energy. If the demand can be managed, ideally to have a capacity factor of 1.0, a line that was previously operating at a capacity factor of 0.5 could deliver 2x the energy that was delivered in the past, but this would result in an increase in loss of up to 4x. A distribution line that experienced loss of 4% might see its loss grow to as much as 16% of the energy it originally delivered (roughly 8% of the doubled delivery) after this increase in capacity factor. Voltage and loss management can play a large role in this area. Voltage management is the next frontier in Grid 2.0.
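Why the increase is "up to" 4x rather than exactly 4x depends on the shape of the starting load. The sketch below compares two hypothetical 24-hour profiles, both with a capacity factor of 0.5 as defined above, against a line run flat at its peak. It assumes fixed voltage and resistance, with demand expressed per unit of the line's peak; the profiles and function names are illustrative assumptions.

```python
def capacity_factor(profile):
    """Average demand divided by peak demand, as defined in the text."""
    return (sum(profile) / len(profile)) / max(profile)


def mean_square(profile):
    """Mean of squared demand; proportional to resistive loss at fixed voltage and resistance."""
    return sum(p * p for p in profile) / len(profile)


# Hypothetical 24-hour profiles, per unit of peak; both have capacity factor 0.5
peaky = [1.0, 0.0] * 12               # full load half the time, idle otherwise
near_flat = [11 / 23] * 23 + [1.0]    # about 0.48 all day with one hour at peak
full = [1.0] * 24                     # managed to capacity factor 1.0

for name, profile in (("peaky", peaky), ("near_flat", near_flat)):
    energy_ratio = sum(full) / sum(profile)
    loss_ratio = mean_square(full) / mean_square(profile)
    print(f"{name}: CF={capacity_factor(profile):.2f}, "
          f"energy x{energy_ratio:.1f}, loss x{loss_ratio:.2f}")
```

In this sketch both starting profiles deliver twice the energy once the line runs at full capacity, but the loss grows by about 2x for the peaky case and approaches 4x for the nearly flat one.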

When load is increased on distribution systems, the limiting capacity is most often the low voltage limit that is breached as the demand increases. Utilities have used capacitor compensation to address this issue for many years. But in recent times, the capacitor compensation has resulted in a limited ability to connect rooftop solar and other renewable sources to existing distribution feeders because of high voltage issues. This has become a significant issue in Australia, where rooftop solar is popular. The solution is to utilize dynamic voltage control, to manage voltage on a continuous basis, similar to what is currently done in managing demand.

Dynamic voltage control, however, has other features that make it potentially highly valuable. The system can not only increase feeder capacity and enable much more distributed generation to be connected; it can also manage and optimize loss, reducing it by about one-third.

Dynamic voltage management has not been extensively used in the past because there was little need; there was almost no distributed generation on the grid. But with the changes that are now well under way, there is a rapidly growing demand for solutions that will enable the connection of more renewable capacity, while managing loss and increasing delivery capacity.

Generac has real strengths in this area, with proprietary control technology and Generac equipment that position it to be a strong leader in managing distribution operations.

Grid 2.0 Is TECHNOLOGY

The future grid may require physical additions, but new technology will provide a lower cost path to deliver more power, allowing time to implement longer-term projects for a robust grid that can continue to grow for the future.

Generac has the technology to meet these needs today, and this will be a valuable addition for utilities that are facing the rapid changes that are coming.
